In this blog post, we will look at the generic process for generating a machine learning model and why each step in that process matters.

Introduction

Machine learning enables a computer application to learn on its own and decide how to perform an assigned task without a human telling it how. To incorporate machine learning capabilities into an application, the developer generates a model, trying and testing different algorithms, tools, and parameters until the result reaches the desired accuracy. The set of guidelines followed along the way is known as the Machine Learning Model Generation Process.

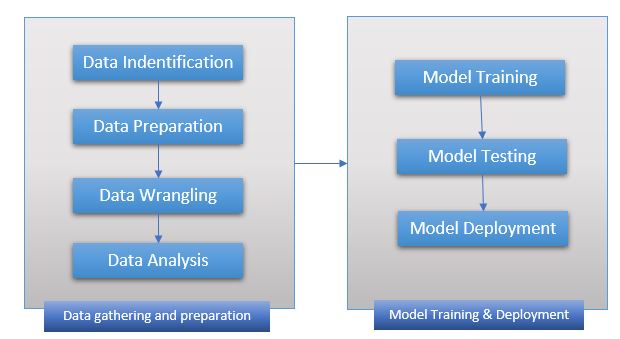

The process flow is shown in the image above. It may differ depending on the requirements and the task the model is meant to achieve, and it may contain more or fewer steps. Here we discuss the most common (generic) approach to generating a machine learning model. This process can be applied across various machine learning frameworks.

The process is broadly divided into two major parts. The first is to gather the required data, prepare it, clean it through data wrangling, and analyze it to check that it fits the requirement. The second is to use the prepared data to train the model, test and retrain it until the desired accuracy is achieved, and finally deploy the model in a real-life application. The details of each step are given below.

Machine Learning Model Generation Process Description

Data Identification

This is the first step in machine learning model generation. The idea is to identify the data required to achieve the goal. The step involves identifying the different sources from which data will be acquired and merging them if and when required. Selecting good-quality data is very important, as it directly affects the output of the generated model.
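As a rough illustration of merging data from two sources, here is a minimal Python sketch. The records, the field names (`id`, `temperature`, `label`), and the `merge_sources` helper are all hypothetical and exist only for this example. A real project would more likely use a data library or a database join.

```python
# Hypothetical records from two separate sources that share an "id" field.
sensor_rows = [
    {"id": 1, "temperature": 21.5},
    {"id": 2, "temperature": 19.0},
    {"id": 3, "temperature": 23.1},
]
label_rows = [
    {"id": 1, "label": "comfortable"},
    {"id": 3, "label": "warm"},
]


def merge_sources(left, right, key):
    """Join two lists of dicts on `key`, keeping only rows found in both."""
    right_by_key = {row[key]: row for row in right}
    merged = []
    for row in left:
        match = right_by_key.get(row[key])
        if match is not None:
            # Combine the fields of both records into one row.
            merged.append({**row, **match})
    return merged


dataset = merge_sources(sensor_rows, label_rows, key="id")
print(dataset)
```

Rows that appear in only one source (here, `id` 2) are dropped in this sketch. Whether to drop or keep them is a decision that depends on the goal of the model.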

Data Preparation

Once the data has been acquired, the next important step, Data Preparation, begins. Here the focus is on the characteristics of the data: its format and quality are analyzed. All the acquired data is put together as a single dataset and randomized, so that most of it can be used to train the model and the rest can be used to test it.
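The randomize-and-split idea can be sketched in a few lines of Python. The 80/20 ratio, the fixed seed, and the `shuffle_and_split` name are assumptions made for this example, not values prescribed by the process.

```python
import random


def shuffle_and_split(rows, train_fraction=0.8, seed=42):
    """Shuffle a copy of `rows`, then cut it into a training and a test part."""
    shuffled = list(rows)                      # leave the caller's list untouched
    random.Random(seed).shuffle(shuffled)      # fixed seed keeps the split repeatable
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Ten placeholder records standing in for a real dataset.
rows = list(range(10))
train_rows, test_rows = shuffle_and_split(rows)

print(len(train_rows), "rows for training,", len(test_rows), "rows for testing")
```

Shuffling before splitting matters when the source data is ordered (for example by date or by class), since an unshuffled split could leave the test portion unrepresentative.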

Data Wrangling (Cleaning)

After the data is prepared, the process of cleaning the dataset begins. This step ensures the dataset meets the required quality. Data is converted into the required format, and feature identification and labeling are completed as part of the process. Records with missing values, duplicate values, or invalid data are removed from the dataset. This keeps the machine learning algorithm from learning noise in the data, which would affect its outcome.
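A minimal Python sketch of the three removals described above (missing, invalid, and duplicate records) might look like the following. The sample rows, the field names, and the "age between 0 and 120" validity rule are assumptions chosen for illustration.

```python
import math

# Hypothetical raw records; the comments mark what is wrong with each one.
raw_rows = [
    {"age": 34, "income": 52000.0, "label": "yes"},
    {"age": 34, "income": 52000.0, "label": "yes"},       # exact duplicate
    {"age": None, "income": 41000.0, "label": "no"},      # missing value
    {"age": -5, "income": 38000.0, "label": "no"},        # invalid age
    {"age": 51, "income": float("nan"), "label": "yes"},  # missing value (NaN)
    {"age": 45, "income": 61000.0, "label": "no"},
]


def is_missing(value):
    """Treat None and floating-point NaN as missing."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def clean(rows):
    """Drop rows that are incomplete, invalid, or already seen."""
    seen = set()
    cleaned = []
    for row in rows:
        if any(is_missing(value) for value in row.values()):
            continue
        if not 0 <= row["age"] <= 120:          # assumed validity rule
            continue
        fingerprint = tuple(sorted(row.items()))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        cleaned.append(row)
    return cleaned


clean_rows = clean(raw_rows)
print(len(raw_rows), "raw rows ->", len(clean_rows), "clean rows")
```

Dropping rows is the simplest option and matches the description above. Filling in missing values instead is a common alternative when data is scarce.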

Data Analysis

In this step, the dataset is analyzed using various visualization techniques to check that the data is properly distributed and not biased towards any specific target.
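One simple check for bias towards a target is to look at how often each label occurs. The sketch below uses a text bar chart as a stand-in for a real plot. The labels and the `class_shares` helper are made up for this example.

```python
from collections import Counter

# Hypothetical target labels taken from a prepared dataset.
labels = ["spam", "ham", "ham", "ham", "spam", "ham", "ham", "ham", "ham", "ham"]


def class_shares(labels):
    """Return the fraction of the dataset that belongs to each label."""
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}


shares = class_shares(labels)

# Print one bar per label; a much longer bar hints at an imbalanced dataset.
for label, share in sorted(shares.items()):
    print(f"{label:>5} {'#' * round(share * 20)} {share:.0%}")
```

If one label dominates, the model may learn to predict it almost always, so it is worth noticing this before training rather than after.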

Training

In this step, the dataset prepared in the steps above is used to train the algorithm and generate the model. The algorithm finds patterns in the data using the features and labels defined in the dataset, so that the resulting model can predict the outcome for a given input.
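To make "training" concrete, here is a small Python sketch using a nearest-centroid classifier, chosen only because it fits in a few lines. It is not the algorithm this post recommends, and the feature values and labels are invented. The "model" it produces is just the average feature vector of each label.

```python
def train_nearest_centroid(features, labels):
    """Learn one centroid (average feature vector) per label."""
    sums, counts = {}, {}
    for x, y in zip(features, labels):
        if y not in sums:
            sums[y] = [0.0] * len(x)
            counts[y] = 0
        sums[y] = [s + v for s, v in zip(sums[y], x)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def predict(model, x):
    """Return the label whose centroid is closest to the input `x`."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(x, centroid))

    return min(model, key=lambda label: squared_distance(model[label]))


# Hypothetical training data: two features per record, one label each.
features = [[1.0, 1.0], [1.5, 2.0], [8.0, 8.0], [9.0, 9.5]]
labels = ["small", "small", "large", "large"]

model = train_nearest_centroid(features, labels)
print(predict(model, [2.0, 1.0]))
print(predict(model, [7.0, 9.0]))
```

In practice a framework would supply the algorithm, but the shape is the same: features and labels go in, and a model that can predict comes out.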

Testing

This is one of the most important steps, as it tells you whether the trained model gives the desired outcome. The portion of the dataset set aside for testing is used to validate the model's output. Once the desired accuracy is achieved, we move to the last step: deploying the model.
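The accuracy check can be sketched as follows. The threshold-based `predict` function is a hypothetical stand-in for a trained model, and the test data and the 0.75 target accuracy are assumptions for this example.

```python
def accuracy(predictions, expected):
    """Fraction of predictions that match the expected labels."""
    correct = sum(1 for p, e in zip(predictions, expected) if p == e)
    return correct / len(expected)


def predict(x):
    """Hypothetical stand-in for a trained model: a simple threshold rule."""
    return "large" if x >= 5.0 else "small"


# Held-out test data that the model did not see during training.
test_inputs = [1.0, 4.5, 6.0, 9.0, 5.5]
test_labels = ["small", "small", "large", "large", "small"]

predictions = [predict(x) for x in test_inputs]
score = accuracy(predictions, test_labels)

target = 0.75  # assumed minimum accuracy for this example
ready = score >= target
print(f"accuracy={score:.2f}, ready to deploy: {ready}")
```

If the score falls short of the target, the usual next move is to go back, adjust the data, algorithm, or parameters, and train again.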

Deployment

The trained model is deployed in the real-world system and brought to life. A model can be deployed in a number of ways; two popular ones are deploying it as a microservice or as part of the application via code sharing (a library).
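As a rough sketch of the library-style option, the Python example below saves a model to a file and loads it back the way an application might at startup. The JSON format, the `model.json` file name, and the single-threshold "model" are all assumptions for illustration. Real frameworks ship their own formats for saving models.

```python
import json
import os
import tempfile


def save_model(model, path):
    """Write the model's learned parameters to a JSON file."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(model, f)


def load_model(path):
    """Read the model's parameters back, as an application would at startup."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def predict(model, x):
    """Apply the loaded parameters to a new input."""
    return "large" if x >= model["threshold"] else "small"


# Hypothetical trained model: a single learned threshold.
model = {"threshold": 5.0}

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "model.json")
    save_model(model, path)      # done once, after training and testing
    deployed = load_model(path)  # done by the application that uses the model

result = predict(deployed, 7.2)
print(result)
```

A microservice deployment would wrap the same `load_model` and `predict` calls behind a network endpoint instead of calling them directly from the application.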

This concludes the post. I hope the article helped you understand the generic process for generating a machine learning model. Thanks for visiting, cheers!

References: Intro Google Machine Learning | Microsoft ML.NET | Scikit-Learn
